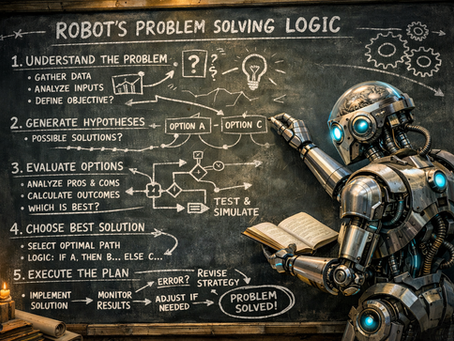

How Language Models Learned to Reason

This article explores the paper "Chain-of-Thought Prompting Elicits Reasoning in Large Language Models," which shows that large language models can perform complex reasoning when prompted to generate intermediate reasoning steps in natural language. Given a few in-prompt examples that spell out explicit "chains of thought," models decompose problems step by step and significantly improve performance on arithmetic, commonsense, and symbolic reasoning tasks, all without fine-tuning or architectural changes.
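To make the idea concrete, here is a minimal sketch of how a few-shot chain-of-thought prompt might be assembled. The `Exemplar` class and `build_cot_prompt` function are hypothetical names introduced only for illustration, and the "Q:/A:" layout is an assumed formatting choice rather than a requirement. The worked exemplar follows the style of the tennis-ball example commonly associated with the paper.

```python
from dataclasses import dataclass


@dataclass
class Exemplar:
    """One worked example: a question, its reasoning steps, and the final answer."""
    question: str
    chain_of_thought: str
    answer: str


def build_cot_prompt(exemplars: list[Exemplar], question: str) -> str:
    """Assemble a few-shot prompt whose answers include intermediate reasoning.

    Hypothetical helper: the "Q:/A:" format is an assumption for illustration.
    """
    blocks = [
        f"Q: {ex.question}\nA: {ex.chain_of_thought} The answer is {ex.answer}."
        for ex in exemplars
    ]
    # The new question ends with an open "A:" so the model continues
    # with its own reasoning steps before giving an answer.
    blocks.append(f"Q: {question}\nA:")
    return "\n\n".join(blocks)


exemplars = [
    Exemplar(
        question=(
            "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
            "Each can has 3 tennis balls. How many tennis balls does he have now?"
        ),
        chain_of_thought=(
            "Roger started with 5 balls. 2 cans of 3 tennis balls each is "
            "6 tennis balls. 5 + 6 = 11."
        ),
        answer="11",
    )
]

prompt = build_cot_prompt(
    exemplars,
    "The cafeteria had 23 apples. If they used 20 to make lunch and "
    "bought 6 more, how many apples do they have?",
)
print(prompt)
```

The resulting string would be sent to a language model as-is. The only difference from standard few-shot prompting is that each exemplar answer contains its reasoning, not just the final result.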

Juan Manuel Ortiz de Zarate

Feb 15 · 10 min read
